Thermal AI for X

Plant Vision™ allows you to understand everything from the microclimate to the microbiome.

Reach out to us:

contact@plantvision.org

About

“Most people have never seen a vertical farm, but that could be about to change.”

It was all a dream…

After 10 years as a creative director for large organizations in NYC, CEO Ryan Hooks set out on a journey to San Francisco. Shortly after entering California on a cross-country road trip in 2015, he realized how precious and volatile water resources have become. If 70% of the world's fresh water goes to agriculture, why weren't more people talking about vertical farming and greenhouses? He quickly assembled a team and attracted investors to build a modular hydroponic greenhouse that could be deployed anywhere in the world, starting on Treasure Island.

Shortly after:

Building a greenhouse, its hardware, and its software from scratch is a lot to take on at once. But with the best team, the greens started to grow with the help of computer vision and environmental automation. Using 95% less water, growing 2x faster, and the promise of Global Seeds Grown Locally built momentum for a hyperlocal Seed to Chef platform.

2 years into the realm of horticulture.

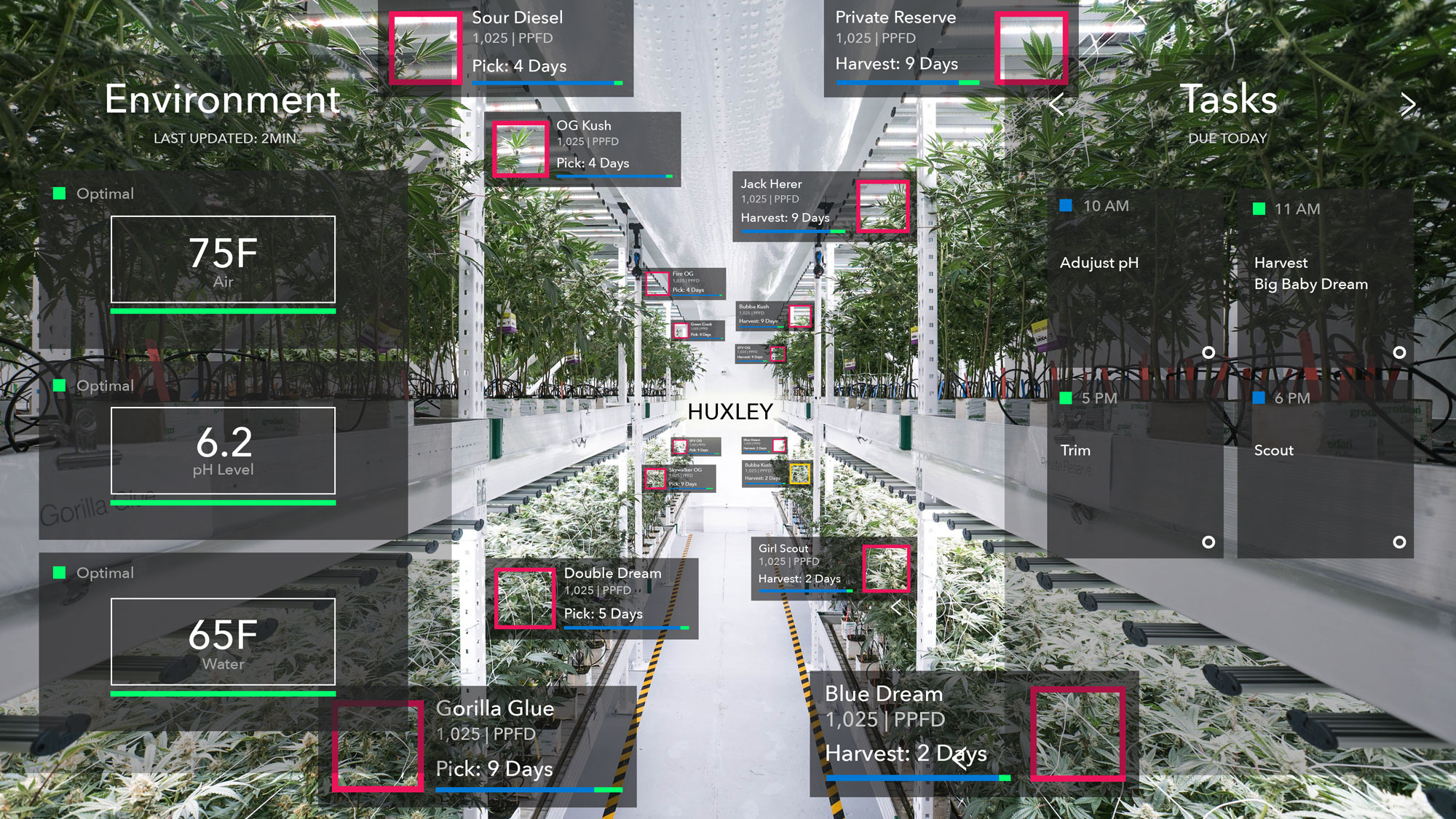

What are the main bottlenecks to scaling and operating such efficient but complex systems? 1) Upfront costs and 2) labor adoption and retention. Could Augmented Reality help create a hands-free OS for growers? Could it Uberize a new workforce to Green the Galaxy?

Hooks goes to the Netherlands.

What started as a couple of meetings in Europe lasted two years and spanned 30 countries. Following the path of least resistance, he quickly realized that augmenting plant breeding could do the most toward feeding 10 billion humans. A huge Buckminster Fuller fan, he was looking for the trim tab approach: doing the most with the least.

Wageningen.

For over a year, Ryan set out to bring the best minds together to create Augmented Phenotyping with the top plant scientists in the world. Computer vision has been used for digital phenotyping for decades, but with the GPUs in the latest smartphones, we finally have the computing power to Understand Plants via Augmented Reality. Multispectral Augmentation is a new field of study in which humans begin to see in spectral bands such as infrared and ultraviolet, whether to correlate what they see with nuances of flavor and smell or to spot disease days earlier. The work is backed by a $2.4 million grant from the Dutch government that will continue for the next four years.

What’s next?

Across the world's 391,000 plant species and 200,000 pollinators, climate volatility is shaking things up at 1,000 times the background rate. Each pollinator sees the world in a different spectral band, so for AI to understand pollination, we humans first need to understand what makes certain plants so attractive to animals, and the relationship that makes that mighty mango taste so darn delicious. As we approach the reality of finite resources, do humans have the tools to adapt to runaway climate change? Do humans have the tools to feed themselves in space? Do humans have the tools to breed food and medicine in different gravitational environments?

Here at Plant Vision, we are building just that.